
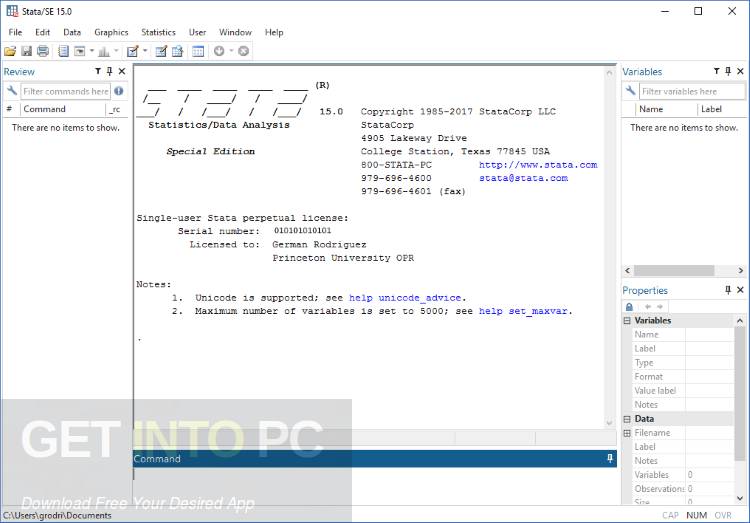

6/5/2023 Set memory stata 13

I've got to nitpick at the term "big data". The definition varies wildly across people (and industries), but if your data set is less than 40 GB in size, I'd be apprehensive about calling it "big data". I'm Apache Spark dev certified, and the workloads that require tools like Hadoop/Spark are usually distributed data sets in the range of 100 GB+. Big data also usually comes with big tools already; you wouldn't be installing some piece of software to handle "big data" on your standalone machine.

Now let's focus on large files that span 1-60 GB (from personal experience). Data of this size will most likely be extracted from some SQL database and will already follow some structure.
